I feel that the word intelligent is misleading


Introduction

Conversation

Dialog

Leo: Doug, the more I learn about AI, the more I feel that the word intelligent is misleading.

It behaves intelligently, but I’m not convinced it actually knows anything.

Doug: That’s a very precise observation.

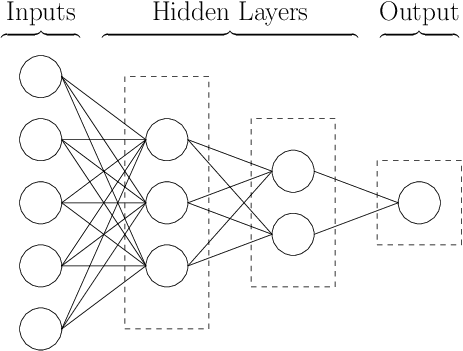

AI doesn’t possess knowledge the way humans do; it builds representations of patterns in data.

Leo: When you say representations, do you mean like a simplified version of reality? Almost like a map instead of the real territory?

Doug: Exactly. AI never sees reality directly; it only sees abstract patterns extracted from examples.

Leo: So when a chatbot answers a question, it’s not retrieving facts. It’s generating a response that statistically fits its internal representation?

Doug: Yes. A chatbot optimizes for likelihood, not truth. It selects the sequence of words that best fits the patterns it learned.

Leo: That explains something I’ve noticed. Sometimes the answer sounds extremely confident, but later I discover it’s wrong.

Doug: That phenomenon has a name. It’s called a hallucination.

Leo: So hallucination doesn’t mean randomness. It means a plausible but false construction?

Doug: Precisely. An AI hallucination is a highly coherent answer that emerges from probability, not verification.

Leo: So confidence is not evidence of correctness. It’s just a side effect of high statistical certainty.

Doug: That’s a very mature distinction. AI can be confidently incorrect because confidence comes from patterns, not understanding.

Leo: Interesting. That feels powerful, but also dangerous.
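For readers who write code, Doug's point about likelihood versus truth can be illustrated with a toy sketch in Python. This is not how a real chatbot is built; it is only a minimal word-pair counter, and the corpus, `train_bigrams`, and `most_likely_next` are hypothetical names invented for this illustration. The corpus deliberately contains a false sentence more often than the true one.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: the false sentence appears more often than the true one.
corpus = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]

def train_bigrams(sentences):
    """Count how often each word follows another word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word` and its estimated probability."""
    followers = counts[word]
    total = sum(followers.values())
    best, freq = followers.most_common(1)[0]
    return best, freq / total

model = train_bigrams(corpus)
word, probability = most_likely_next(model, "is")
print(word, round(probability, 2))  # prints: sydney 0.67
```

The sketch picks "sydney" because it is the most frequent pattern in the data, not because it is correct. The answer is plausible and comes with a high score, yet it is false, which is the kind of confident error the dialog calls a hallucination.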

Vocabulary

| Word/expression | Meaning | Example |
| --- | --- | --- |
| the more…, the more… | A structure that shows how one thing increases or changes together with another. | The more data an AI sees, the more accurate its predictions become. |
| misleading | Something that seems correct or helpful, but actually gives the wrong idea. | Calling AI “intelligent” can be misleading, because it doesn’t truly understand. |
| likelihood | How probable something is to happen or be correct. | The chatbot chooses the answer with the highest likelihood, not the highest truth. |
| side effect | An unintended result that happens because of something else. | Hallucinations are a side effect of predicting words without understanding meaning. |
| representations of patterns | Internal models that summarize how things usually behave, instead of storing exact facts. | AI stores representations of patterns, not memories like humans do. |
| abstract patterns | Simplified patterns that remove details and focus only on structure or relationships. | The AI learns abstract patterns of language, not the real world itself. |
| hallucination | When an AI produces an answer that sounds correct and detailed, but is false or invented. | The chatbot gave a confident explanation, but it was a hallucination. |
| randomness | Behavior that has no pattern or order. | AI hallucinations are not pure randomness; they follow learned patterns. |
| plausible | Something that sounds reasonable or believable, even if it’s wrong. | The answer was plausible, but no one could verify it. |
| high statistical | Based on strong probability calculated from large amounts of data. | The model chose that answer because it had high statistical support. |
| almost like | Used to compare two things that are similar but not identical. | AI learning is almost like copying behavior without knowing the reason behind it. |
| coherent answer | An answer that is well-structured, logical, and flows smoothly. | The AI produced a coherent answer, even though it was incorrect. |
| retrieving | Finding and bringing back stored information. | Unlike a database, a chatbot is not retrieving facts; it is generating responses. |
| sounds (confident) | Appears sure and authoritative, even without certainty. | The explanation sounded confident, but confidence does not guarantee correctness. |

Exercises

Storytelling

Role play

Instructions

For this exercise, you will practice using the vocabulary related to this dialog by acting out a role-play scenario.

Include the words provided in the vocabulary section.

Personal experience

Talk about your past experience with the topic.

Homework

Reading comprehension

Why does Leo believe the term “intelligent” is misleading when applied to AI?

How does Doug describe AI’s relationship with knowledge?

What does the “map versus territory” analogy mean in the dialog?

What is an AI hallucination according to the conversation?

What main concern does Leo express at the end of the dialog?

Fill in the blanks

Fill in the blanks with the correct word or phrase from the vocabulary list.

__________________ data an AI processes, __________________ accurate its predictions tend to become.

Calling AI “intelligent” can be __________________, because it behaves intelligently without true understanding.

A chatbot does not focus on truth; it selects the response with the highest __________________.

AI systems store __________________ rather than exact memories of past events.

These models rely on __________________, which remove real-world details and focus only on structure.

When an AI gives a detailed but false explanation, this phenomenon is known as a __________________.

An AI hallucination is not pure __________________; it follows learned language patterns.

The response seemed __________________, even though no evidence supported it.

Hallucinations are a __________________ of predicting language without understanding meaning.

The model chose that output because it had __________________ probability support from the data.

AI learning is __________________ imitating behavior without knowing the underlying reasons.

The system produced a __________________ that flowed logically from start to finish.

Unlike a search engine, a chatbot is not __________________ facts from a database.

The explanation __________________, but confidence alone does not guarantee correctness.

Partner role play

Create your own partner role play activity. Make sure to include the words you’ve learned.

Produce

Create Your Own Dialog

Instructions:

Create your own dialog using the new words and expressions you’ve learned.